Presentation on 1 November 2021 at the annual meeting of the Danish Gymnasiernes, Akademiernes og Erhvervsskolernes Biblioteksforening (GAEB) in Vejle, Denmark.

I had the pleasure of giving a series of lectures on algorithms for information search on the internet to the association of Danish librarians. This is a highly educated crowd with a lot of background knowledge, so we could fast-forward through some of the basic stuff.

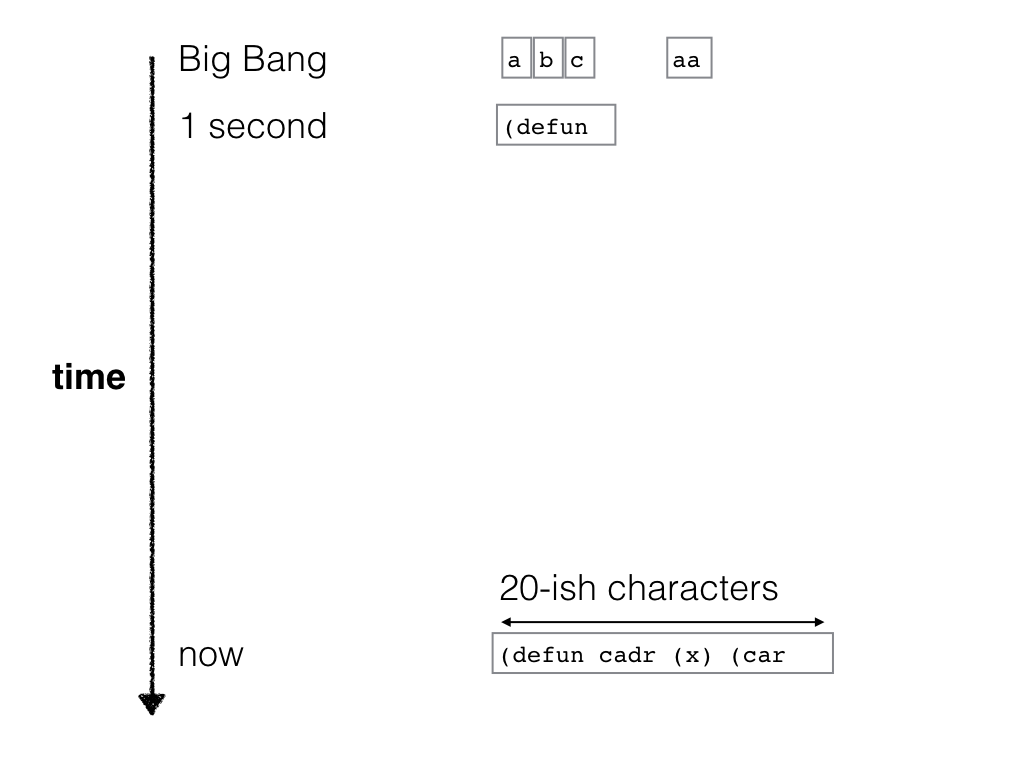

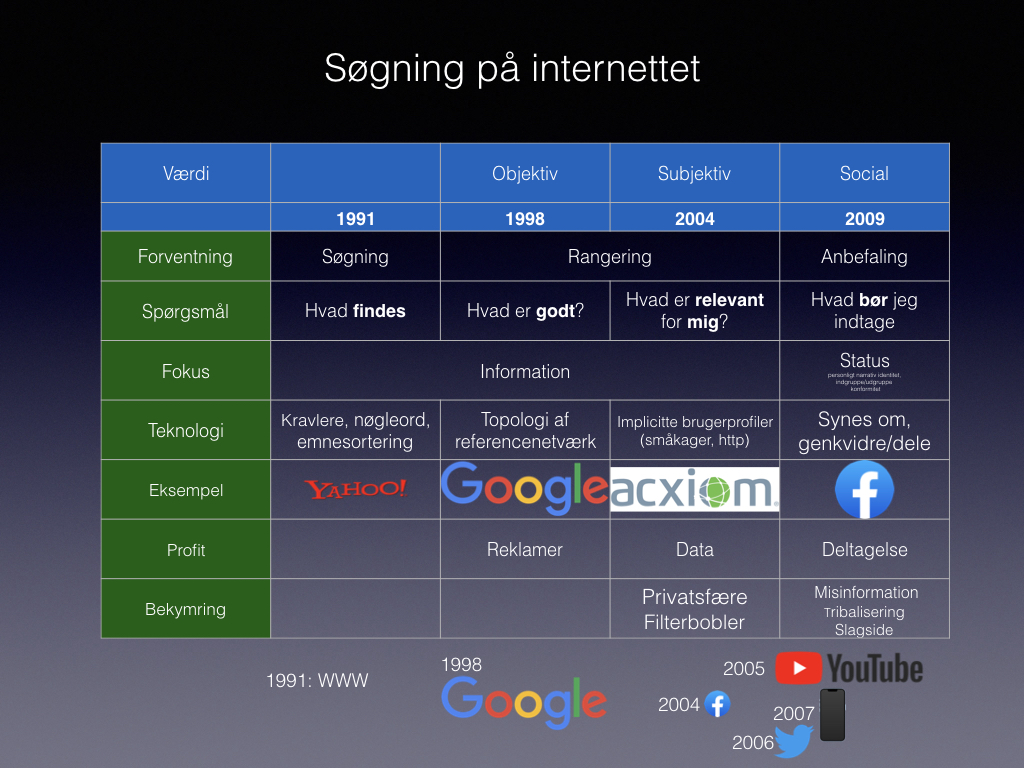

I am, however, quite happy with the historical overview slide that I created for this occasion:

Here’s an English translation:

| | Objective | | Subjective | Social |
| --- | --- | --- | --- | --- |
| | 1991 | 1998 | 2004 | 2009 |
| What do we expect from the search engine? | Searching | Ranking | Ranking | Recommendation |
| What’s searching about? | Which information exists? | What has high quality? | What is relevant for me? | What ought I consume? |
| Focus | Find information | Find information | Find information | Maintain my status, prevent or curate information |
| Core technology to be explained | Crawlers, keyword search, categories | Reference network topology | Implicit user profiles, nearest neighbour search, cookies | Like, retweet |
| Example company | Yahoo | | Acxiom | Twitter, Facebook |
| Source of profit | Advertising | | User data | Attention, interaction |
| Worry narrative | | | Privacy, filter bubbles | Misinformation, tribalism, bias |

Particularly cute is the row about “what is to be explained”. I’ve given talks on “how searching on the internet works” regularly since the 90s, and it’s interesting how much the content and focus of what people care about and worry about (for various interpretations of “people”) has changed.

- Here is the late 2000s version: How Google works (in Danish). Really nice production values. I still have hair. Google is about network topology and finding stuff, and the quality measure is objectively defined by network topology and the PageRank algorithm.

- In 2011, I was instrumental in placing the filter bubble narrative in Danish and Swedish media (Weekendavisen, Svenska Dagbladet). Suddenly it’s about subjective information targeting. I gave a lot of popular science talks about filter bubbles. The algorithmic aspect is mainly about clustering.

- Today, most attention is about curation and manipulation, and the algorithmic aspect is different again.
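As a rough illustration of the late 2000s framing in the list above, where quality is derived purely from link topology, here is a minimal power-iteration sketch of PageRank. The toy graph, the damping factor of 0.85, the iteration count, and the function name `pagerank` are assumptions chosen for illustration; none of this is taken from the talk itself.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Approximate PageRank by power iteration.

    `links` maps each page to the list of pages it links to.
    """
    # Collect every page that appears as a source or as a link target.
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}

    for _ in range(iterations):
        # Every page gets a baseline share from the "random jump".
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Pass rank along outgoing links in equal shares.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page without outgoing links spreads its rank evenly.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical four-page web: most links point to "c", nothing points to "d".
toy_web = {"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}
ranks = pagerank(toy_web)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(page, round(score, 3))
```

In this toy graph the page with the most incoming links ends up on top and the page nobody links to ends up at the bottom, without any reference to who is searching. That is the sense in which the ranking is "objective".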

I briefly spoke about digital education (digital dannelse), social media, and disinformation, but it’s complicated. A good part of this was informed by Hugo Mercier’s Not Born Yesterday and Jonathan Rauch’s The Constitution of Knowledge, which (I think) get this right.

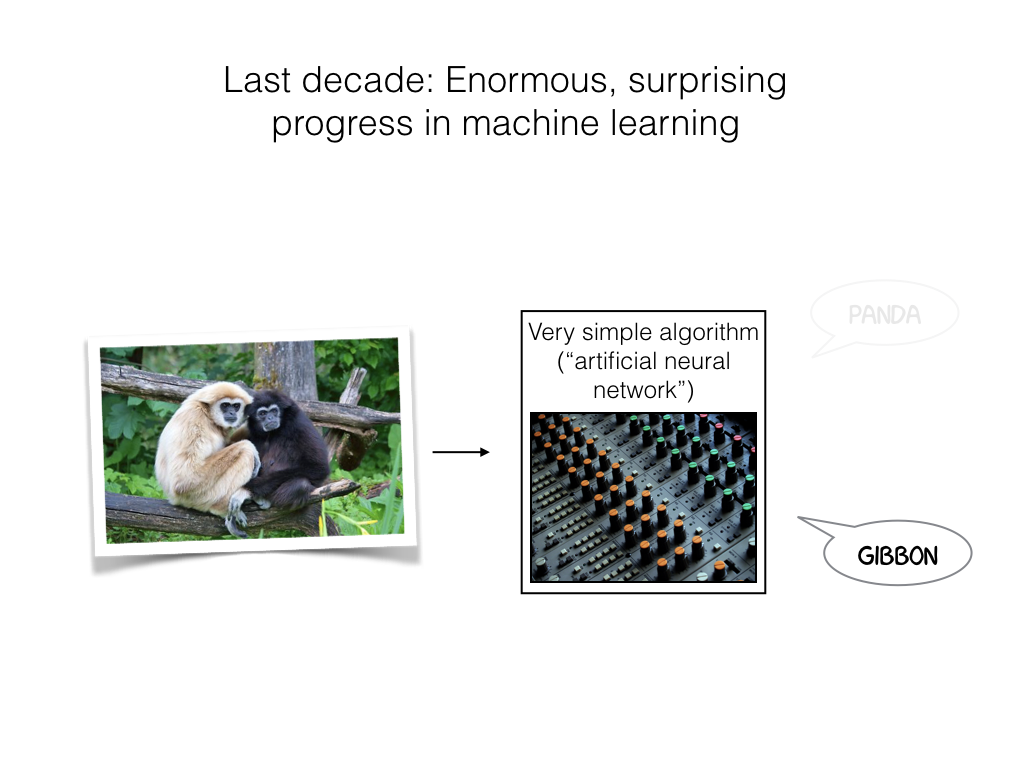

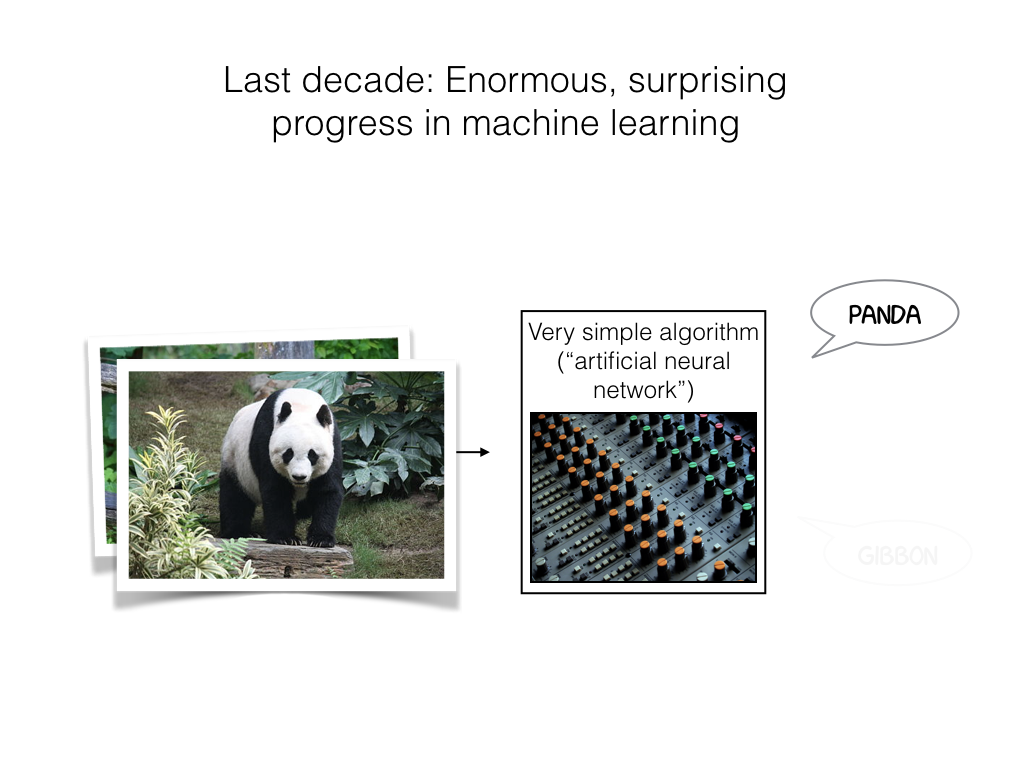

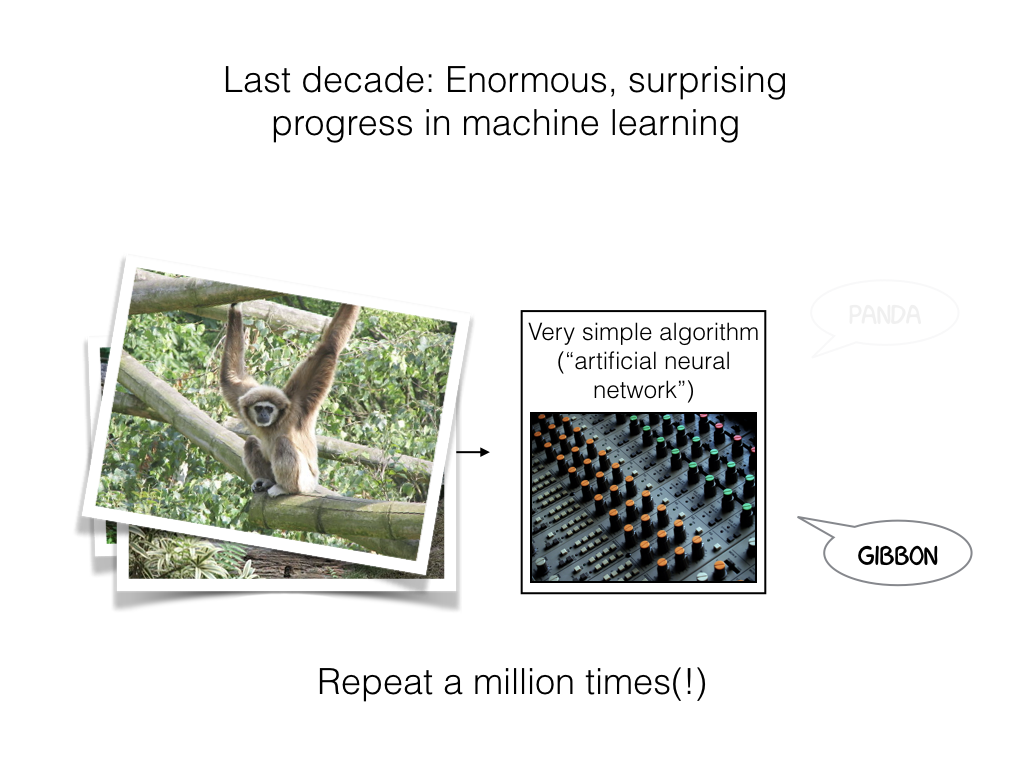

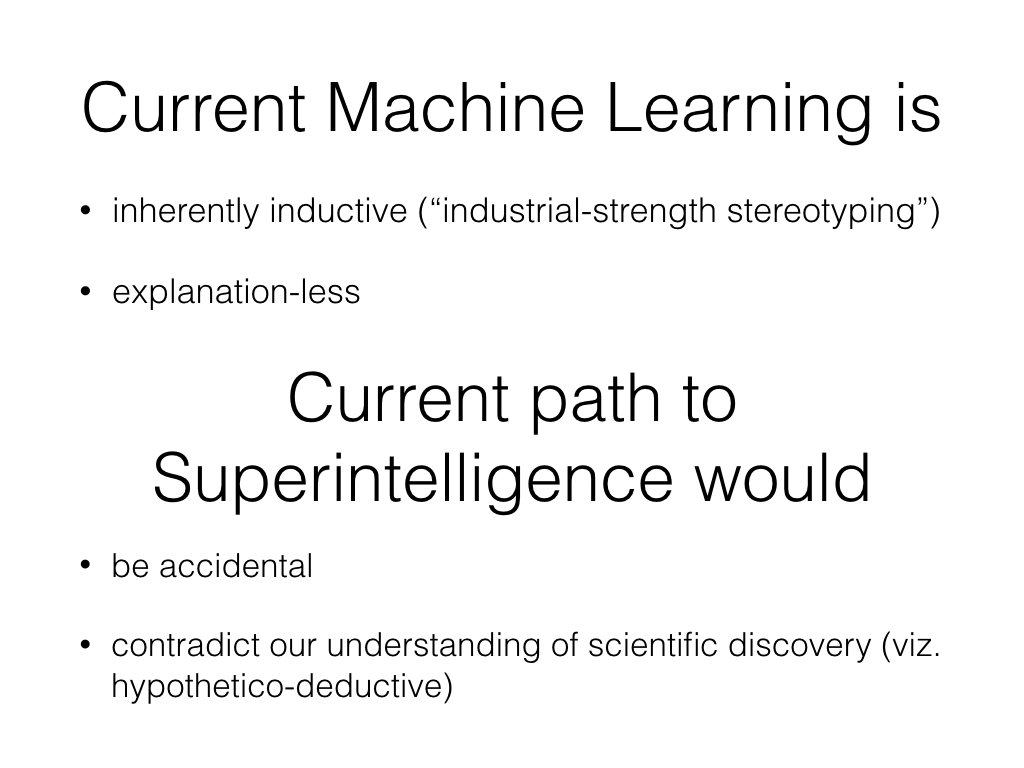

The bulk of the presentation was based on somewhat tailored versions of my current “Algorithms, explained” talk and an introduction to various notions of group fairness in algorithmic fairness, both of which can be found elsewhere in slightly different form.

There was a lot of room for audience interaction, which was really satisfying.