Ah, the joys of editing Wikipedia.

Two days ago, I stumbled over interesting news on the web that a German high schooler, Shouryya Ray, had solved a physics problem posed by Newton that had baffled scientists for centuries. These things interest me, so I read the largely uninformative article and began hunting for more information in the amazingly chaotic font of wisdom that is the internet. What was the problem? Why had it been difficult? What was the solution?

The Wikipedia entry, normally the trusted source for that kind of question, repeated the rather vague descriptions found in the media articles. Mostly human interest stuff about Shouryya Ray’s background, early life, ambitions, and views on mathematics. All nicely sourced to the same media articles, which were all obviously copied from each other, with slight increases in hyperbole. By now (Monday 29 May), the story has reached the so-called Science and Technology web section of Danish tabloid Ekstrabladet. It has not improved.

What did he do?

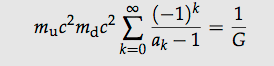

A thread at Reddit/mathematics and a thread at StackExchange mathematics seem to have mostly deduced, over the past few days, what Shouryya Ray’s result is. A pretty neat (implicit) solution to a differential equation for calculating the trajectory of a particle under certain conditions. Follow the links if you’re interested. This is awesome for a 16-year-old high school student, far above what I could have done at that age. Clearly university-level calculus.

He submitted this solution to the annual German youth science contest Jugend forscht, won his regional finals for Saxony, and placed 2nd in the national finals for the category Mathematics and Computer Science. Well done.

What the media got out of it

The source of the rampant media story that followed, as far as my Google skills get me, is an article in the online version of Welt (a normally reputable German paper): 16-jähriges Mathegenie löst uraltes Zahlenrätsel. This appears under Vermischtes, the Misc/Human Interest section of the paper. The article itself is actually still modest on the hyperbole, saying only that the original problem was formulated by Newton (which, as far as I understand, is true) and “seither ungezählte Mathematiker zur Verzweiflung gebracht haben dürfte”. This translates to “is likely to have driven untold numbers of mathematicians to despair”. Note the subjunctive dürfte. This is carefully expressed and factually correct: the numbers of mathematicians confounded by the resulting differential equations are indeed ungezählt (uncounted, or untold).

The headline, probably set by somebody other than the journalist, Céline Lauer, is “16-year-old mathematical genius solves ancient maths problem.” This is where the story goes off into lala-land and probably where the excitement starts.

Next stop: the story hits the anglosphere, possibly in the Daily Mail online. Schoolboy ‘genius’ solves puzzles posed by Sir Isaac Newton that have baffled mathematicians for 350 years. The subjunctive, together with any restraint, has gone the way of the Dodo. This is a good story, and nobody cares about the original project. It spreads like wildfire among non-mathematical people. Many of the online sources, those that permit comments, contain isolated genuine questions from interested readers about what the result actually was, but nobody gets any wiser.

Wikipedia

What to do? After some soul searching I did the Right Thing and put the Wikipedia article for Shouryya Ray (created three days ago, 27 May) on my watch list, whittling it down to correct, verifiable information. Wikipedia has very well-thought-out policies about these kinds of things, such as guidelines for Notability of Academics and People notable for only one event.

Of course, once you start doing that, you need to see it through. I’ve been reverting edits from decent, intelligent, friendly, well-intended contributors ever since. All they want is to tell the world about this young genius. And I revert them. It puts me in a foul mood.

At least currently, the page is correct. (Who knows what happened while I typed this.) The comment thread at the Ekstrabladet article, Teenager løste 350 år gammel Newton-gåde (“Teenager solved 350-year-old Newton riddle”), gives me some consolation:

Commenter: that [newspaper] article didn’t tell me much….

Commenter: and why does it say on wikipedia that he got 2nd place???

Commenter: Wikipedia knows EVERYTHING!

[…]

Commenter: Wikipedia does know a bit more than Ekstra-bladet, wouldn’t you agree on that?

What now?

We’ll see where this ends. What I’m worried about is how this may taint the reputation of Shouryya Ray. He did absolutely nothing wrong. He’s clearly very bright and has a promising career as an academic ahead of him. (We can still hope he switches to Theoretical Computer Science, can’t we?)

He fell victim to wanton publicity, to scientifically illiterate journalists, and to people who decide what’s newsworthy with their heart instead of their brain. I dearly hope this does not hurt or discourage him.

We need more people like him. And we need fewer bad journalists. And we need more people like the nerds at Reddit. Another good day for internet forums, another bad day for online versions of old media.

Now back to the life-killing task of reverting Wikipedia edits.

Update (29 May, 19:16)

A journalist worth his salt actually wrote a good description of this: 16-year-old’s equations set off buzz over 325-year-old physics puzzler. Alan Boyle at MSNBC. He actually contacted physicists, all of whom are naturally reluctant to comment on this. He cites the Reddit thread, too! Good job, Alan. More of that.

Alas, my fears for Shouryya Ray and Jugend forscht are quite valid:

But a falling body with air resistance (however modeled) is hardly a ‘fundamental unsolved problem,’ as [Ray] seems to think.

These comments, and similar ones, pop up all over the net now. As if Ray, or his teachers, or the jurors of Jugend forscht made any such hyperbolic claims. As if they were deluded and didn’t do their job right.

But I can’t find any questionable claims from Ray or his teachers or jurors anywhere. On the contrary: in the interviews, Ray is consistently described as very modest. The rot sets in with the Welt article described above, with the dürfte and the sensationalist headline.

This is a story about journalists. Not about Ray, and not about trajectories.

Except for the downward trajectory of the Daily Mail, maybe. Cheap shot, I know.